How to Conduct an AI Risk Assessment for Your Company in 2026

Artificial intelligence now touches almost every part of business. From chatbots handling customer service to algorithms predicting market trends, AI is changing how companies operate. But these advances come with pitfalls, and that’s where conducting an AI risk assessment becomes essential. It helps you spot issues before they turn into big problems, keeping your business safe and compliant.

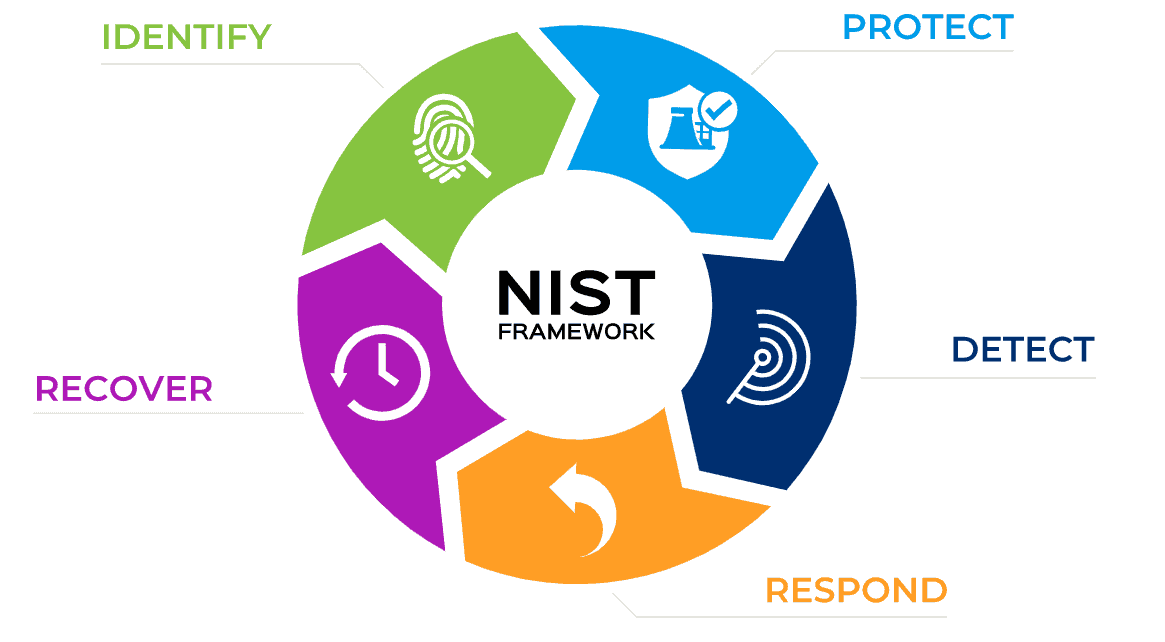

As we move through 2026, regulations like the EU AI Act are phasing in their obligations, and frameworks from organizations such as NIST are guiding companies on best practices. Whether you’re running a company in India or anywhere else, staying ahead means understanding these risks and managing them effectively. This guide walks you through the process step by step, so you can protect your operations while making the most of AI.

Image credit: Splunk

Understanding AI Risks in 2026

AI isn’t just a buzzword anymore; it’s a core part of many businesses. But what exactly are the risks? In 2026, we’re seeing more sophisticated threats. For starters, there’s bias in AI systems. If your training data isn’t diverse, your AI might make unfair decisions, like in hiring tools that favor certain groups over others.

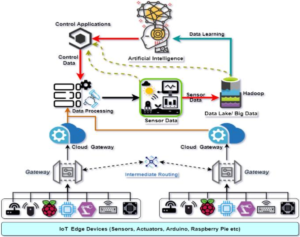

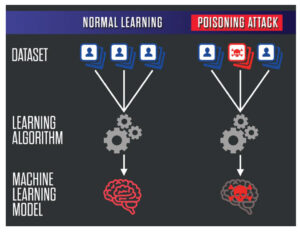

Then there’s data privacy. With laws like GDPR and India’s Digital Personal Data Protection Act, mishandling personal information can lead to hefty fines. Cybersecurity is another big one: AI systems can be hacked, leading to data breaches or manipulated outputs. AI can also be turned against you, for example through deepfakes or AI-generated misinformation that could damage your brand.

Operational risks are on the rise too. If your AI model drifts over time, meaning it performs worse as real-world data changes, that could affect everything from supply chain predictions to customer recommendations. And don’t forget ethical concerns, like job displacement or lack of transparency in how AI makes decisions.

According to recent reports, over 70% of companies using AI have faced at least one risk incident in the past year. That’s why conducting an AI risk assessment isn’t optional—it’s a must for survival.

For more on how AI ethics play into this, check out our guide to AI ethics in business.

Why Your Company Needs to Conduct an AI Risk Assessment Now

Imagine launching a new AI-powered product only to find out it violates new regulations, costing you millions in penalties. Or worse, a security flaw exposes customer data, eroding trust overnight. These aren’t hypotheticals; they’re happening to businesses worldwide.

Conducting an AI risk assessment helps you identify these issues early. It supports compliance with laws like the EU AI Act, which entered into force in August 2024 and phases in its obligations over several years, with most requirements for high-risk systems scheduled to apply from August 2026. The Act categorizes AI systems by risk level and requires assessments for high-risk ones. In the US, states like California have their own mandates, and globally, frameworks like NIST’s AI RMF are becoming standard.

Beyond compliance, it’s about building resilience. A solid assessment can improve your AI’s performance, reduce costs from errors, and even give you a competitive edge by showing customers you’re responsible with tech. Plus, investors and partners are increasingly demanding proof of AI governance.

If you’re just starting with AI, this is the perfect time. Even small companies can benefit; it’s not just for tech giants.

External link: Learn more about the NIST AI Risk Management Framework for detailed guidance.

Key Frameworks to Guide Your AI Risk Assessment

Before diving into the steps, let’s talk frameworks. These are like roadmaps that make conducting an AI risk assessment structured and effective.

The NIST AI Risk Management Framework is one of the most widely used. It breaks things down into four core functions: Govern, Map, Measure, and Manage. It’s voluntary and flexible, works for any company size, and focuses on trustworthiness in AI.

Then there’s the EU AI Act. It classifies AI into unacceptable, high, limited, and minimal risk categories. For high-risk systems, like those in healthcare or finance, you need conformity assessments and ongoing monitoring.

ISO/IEC 42001 is another solid one, offering standards for AI management systems. It includes controls for risk treatment and emphasizes continuous improvement.

Other tools include the OWASP Top 10 for LLMs, which is great for generative AI risks like prompt injections. And for a comprehensive database, check the MIT AI Risk Repository, which catalogs over 1,700 risks from various sources.

Choosing the right framework depends on your industry and location. If you operate in Europe or offer AI systems to customers there, start with the EU AI Act. For US-based firms, NIST is a go-to. Combine them for the best results.

External link: Explore the EU AI Act details to see how it applies to your business.

Image credit: eastgate-software.com

Step-by-Step Guide: How to Conduct an AI Risk Assessment

Now, the meat of it—how to actually do this. I’ll break it down into clear steps, with tips and examples.

Step 1: Assemble a Cross-Functional Team

You can’t do this alone. Bring together experts from different areas: IT, legal, compliance, ethics, and business leaders. If your company has a risk management function, include someone from it.

Why? Each brings a unique perspective. IT knows the tech side, legal handles regulations, and business folks ensure it aligns with goals.

Start with a kickoff meeting. Define roles—maybe appoint a lead assessor. If your company is small, consider outsourcing to consultants specializing in AI risks.

Tip: Train your team on basic AI concepts. Resources like online courses from Coursera can help.

Internal link: See our post on building effective teams for tech projects.

Step 2: Inventory Your AI Systems

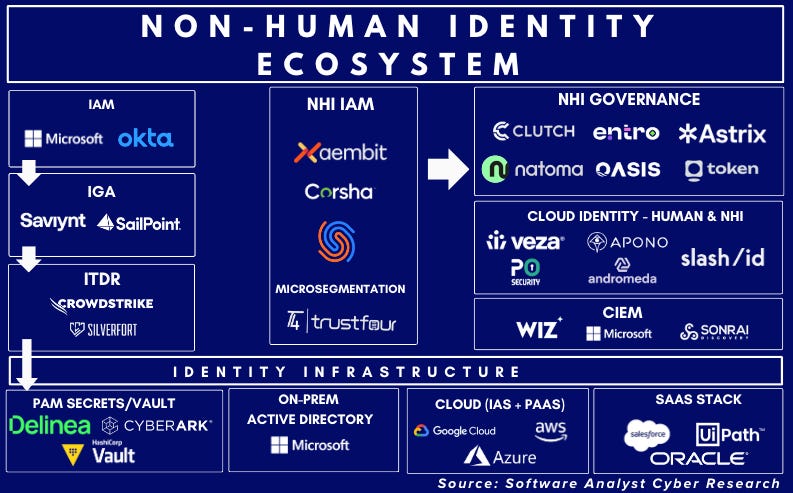

Know what you’ve got. Create a list of all AI tools and systems in use. This includes obvious ones like chatbots and hidden ones embedded in software from vendors.

Document details: What data do they use? What’s their purpose? Who developed them?

Use tools like spreadsheets or dedicated software for this. Check for “shadow AI”—employees using unapproved tools like personal ChatGPT accounts.

Example: A retail company might list their recommendation engine, inventory predictor, and customer service bot.

This step is crucial because you can’t assess what you don’t know about.
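The inventory described above can be sketched as a small data structure. This is a minimal illustration, not a prescribed template: the `AISystem` class, its field names, the vendor entries, and the `approved` flag for spotting shadow AI are all assumptions made for the example, built around the retail systems mentioned above.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One row of the AI inventory. Field names are illustrative."""
    name: str
    purpose: str
    data_used: list[str]
    developer: str          # internal team or vendor
    approved: bool = True   # False marks a "shadow AI" tool


def shadow_ai(inventory: list[AISystem]) -> list[str]:
    """Return the names of tools in use that were never approved."""
    return [system.name for system in inventory if not system.approved]


# Hypothetical inventory for a retail company.
inventory = [
    AISystem("Recommendation engine", "Product suggestions",
             ["purchase history"], "In-house data team"),
    AISystem("Inventory predictor", "Stock forecasting",
             ["sales data"], "Hypothetical vendor"),
    AISystem("Customer service bot", "Answer support queries",
             ["chat transcripts"], "Hypothetical vendor"),
    AISystem("Personal ChatGPT account", "Drafting emails",
             ["unknown"], "OpenAI", approved=False),
]

print(shadow_ai(inventory))
```

A spreadsheet with the same columns works just as well; the point is that every system answers the same questions about data, purpose, and developer.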

External link: For inventory templates, visit Red Clover Advisors’ guide.

Step 3: Identify Potential Risks

Dig into the dangers. Categorize them: technical (like model drift), ethical (bias), legal (compliance), security (hacking), and operational (reliability).

Use your framework to guide this. For NIST, map risks across the AI lifecycle—from design to deployment.

Brainstorm with your team. Ask: What could go wrong? How might this affect stakeholders?

Common risks in 2026 include generative AI hallucinations, where models output false info, and supply chain vulnerabilities in third-party AI.

Document everything, even low-probability ones.
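One way to keep the brainstorm organized is a simple risk register grouped by the five categories above. The sketch below is only an illustration: the `Risk` class, the sample entries, and the system names are hypothetical. It also lists categories that have no entries yet, which can prompt the team to look again.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    TECHNICAL = "technical"      # e.g. model drift
    ETHICAL = "ethical"          # e.g. bias
    LEGAL = "legal"              # e.g. compliance
    SECURITY = "security"        # e.g. hacking
    OPERATIONAL = "operational"  # e.g. reliability


@dataclass
class Risk:
    system: str
    description: str
    category: Category


def group_by_category(risks: list[Risk]) -> dict[Category, list[str]]:
    """Collect risk descriptions under their category."""
    grouped = defaultdict(list)
    for risk in risks:
        grouped[risk.category].append(risk.description)
    return dict(grouped)


# Hypothetical register entries.
register = [
    Risk("Demand forecast", "Model drift as buying patterns change", Category.TECHNICAL),
    Risk("Hiring tool", "Bias against certain groups", Category.ETHICAL),
    Risk("Customer service bot", "Prompt injection", Category.SECURITY),
    Risk("Customer service bot", "Third-party model vulnerability", Category.SECURITY),
]

grouped = group_by_category(register)
# Categories nobody has logged a risk for yet.
uncovered = [c.value for c in Category if c not in grouped]
print(uncovered)
```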

For deeper insights, read about top AI risks from Clarifai.

Step 4: Evaluate and Prioritize Risks

Not all risks are equal. Assess likelihood and impact. Use a matrix: high likelihood/high impact gets top priority.

Factors: How severe is the harm? Financial loss? Reputational damage?

Score them quantitatively if possible, like on a 1-5 scale.

Prioritize based on your company’s risk tolerance. Is the system classified as high-risk under the EU AI Act? Bump it up.

This step helps focus resources where they matter most.
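The likelihood and impact matrix above can be sketched in a few lines. Treat this as an illustration under stated assumptions: the score is likelihood times impact on the 1-5 scale, the thresholds of 15 and 8 are arbitrary choices you would tune to your own risk tolerance, and the `eu_high_risk` flag is a hypothetical way to bump regulated systems to the top.

```python
from dataclasses import dataclass


@dataclass
class ScoredRisk:
    name: str
    likelihood: int             # 1 (rare) to 5 (almost certain)
    impact: int                 # 1 (negligible) to 5 (severe)
    eu_high_risk: bool = False  # high-risk under the EU AI Act

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def priority(risk: ScoredRisk) -> str:
    """Map a risk to a priority band. Thresholds are assumptions."""
    if risk.eu_high_risk or risk.score >= 15:
        return "high"
    if risk.score >= 8:
        return "medium"
    return "low"


ORDER = {"high": 0, "medium": 1, "low": 2}

# Hypothetical risks with illustrative scores.
risks = [
    ScoredRisk("Model drift in demand forecast", likelihood=4, impact=3),
    ScoredRisk("Customer data breach", likelihood=2, impact=5),
    ScoredRisk("Biased loan decisions", likelihood=3, impact=4, eu_high_risk=True),
    ScoredRisk("Chatbot tone issues", likelihood=3, impact=1),
]

# Sort by priority band first, then by raw score within each band.
ranked = sorted(risks, key=lambda r: (ORDER[priority(r)], -r.score))
for r in ranked:
    print(f"{priority(r):6} {r.score:2}  {r.name}")
```

Multiplying the two scores is only one convention; some teams prefer a lookup table so that a rare but catastrophic risk is never ranked below a frequent nuisance.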

Internal link: Related reading on prioritizing business risks.

Step 5: Develop Mitigation Strategies

For each priority risk, plan how to handle it. Avoid, reduce, transfer, or accept?

Strategies: For bias, use diverse datasets and audits. For security, implement encryption and access controls.
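A bias audit can start with something as simple as comparing selection rates across groups. The sketch below is a minimal example, not a complete fairness methodology: the group labels and decision counts are made up, and the 0.8 cutoff is the "four-fifths" rule of thumb from US employment guidance, used here only as an assumed screening threshold.

```python
from collections import Counter


def selection_rates(decisions):
    """decisions: iterable of (group, was_selected) pairs."""
    totals, selected = Counter(), Counter()
    for group, was_selected in decisions:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so no disparity to measure
    return min(rates.values()) / highest


# Hypothetical hiring-tool outcomes: 100 candidates per group.
decisions = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 76
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed screening threshold
print(rates, round(ratio, 2), needs_review)
```

A low ratio does not prove the model is unfair, and a high one does not prove it is fair; it simply tells you where a closer audit is worth the effort.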

Set controls like regular testing or human oversight for critical decisions.

Document mitigation plans with timelines and owners.

Example: If data poisoning is a risk, train on verified sources and use anomaly detection.
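As a rough illustration of the anomaly detection mentioned above, the sketch below flags values that sit far from the rest of a numeric training column using the modified z-score (median and median absolute deviation). It is one simple technique among many and will not catch subtle poisoning; the sample values and the function name are hypothetical, and the 3.5 threshold is a commonly used default rather than a requirement.

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Return indexes of values whose modified z-score exceeds threshold."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread in the data, so nothing can be scored
    flagged = []
    for index, value in enumerate(values):
        # 0.6745 scales the MAD so the score is comparable to a z-score.
        score = 0.6745 * (value - med) / mad
        if abs(score) > threshold:
            flagged.append(index)
    return flagged


# Hypothetical feature column with one suspicious record.
values = [10.1, 9.8, 10.3, 10.0, 9.9, 55.0, 10.2]
print(flag_outliers(values))
```

Flagged records should go to a person for review rather than being deleted automatically, since unusual data is not always malicious.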

Tools like Lumenova AI can automate some of this.

External link: Check Splunk’s AI risk management insights.

Step 6: Implement, Monitor, and Review

Put plans into action. Integrate into daily operations.

Set up monitoring: Use dashboards for real-time checks on AI performance.

Review at least annually, and after significant changes such as new regulations or model updates.

Conduct audits and update assessments as needed.

In 2026, continuous monitoring is key due to fast-evolving AI.

Tip: Use AI governance platforms like OneTrust for tracking.

This closes the loop, making your assessment ongoing.
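One check a monitoring dashboard might run is a drift score that compares live model inputs or outputs against a baseline. The sketch below uses the Population Stability Index (PSI) as an example metric. The bin edges and sample data are made up, and the 0.1 and 0.25 levels in the comments are a commonly cited rule of thumb, not a standard.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index of two samples over shared bins."""
    def proportions(sample):
        counts = [0] * (len(edges) - 1)
        for x in sample:
            for i in range(len(counts)):
                is_last = i == len(counts) - 1
                # Bins are [low, high), except the last, which includes its top edge.
                if edges[i] <= x < edges[i + 1] or (is_last and x == edges[-1]):
                    counts[i] += 1
                    break
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]

# Hypothetical model scores: evenly spread at launch, skewed high today.
baseline = [0.1] * 25 + [0.3] * 25 + [0.6] * 25 + [0.9] * 25
live = [0.1] * 10 + [0.3] * 20 + [0.6] * 30 + [0.9] * 40

drift = psi(baseline, live, edges)
# Rule of thumb: below 0.1 stable, 0.1 to 0.25 worth watching, above 0.25 investigate.
print(round(drift, 3))
```

Running a check like this on a schedule, and alerting when the score crosses your chosen threshold, turns the annual review into the continuous monitoring described above.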

Tools and Resources for AI Risk Assessment

You don’t need to reinvent the wheel. Here are some top tools:

- NIST AI RMF Playbook: Free, with templates.

- Holistic AI: For compliance tracking.

- Credo AI: Automates governance.

- Calypso AI: Focuses on data leaks in GenAI.

- AccuKnox AI-SPM: Zero-trust security.

Software like Superblocks can also help you deploy AI applications with controls in place.

For free resources, the MIT AI Risk Repository is invaluable.

External link: Explore top AI risk tools from People Managing People.

Real-World Examples and Case Studies

Let’s see this in action. A fintech company in 2025 faced bias in its loan approval AI. After conducting an AI risk assessment using the NIST AI RMF, the company retrained its models with balanced data, reducing rejection disparities by 40%.

Another example: A healthcare firm subject to the EU AI Act identified that its diagnostic tools were high-risk. It added human review loops, avoiding fines.

In India, a manufacturing business used ISO/IEC 42001 to assess its predictive maintenance AI, spotting cybersecurity gaps and preventing downtime.

These show that proper assessments pay off.

For more cases, read Baker Donelson’s AI forecast.

Common Challenges and How to Overcome Them

Challenge 1: Lack of expertise. Solution: Partner with consultants or use training programs.

Challenge 2: Resource constraints. Solution: Start small and focus on high-risk areas.

Challenge 3: Keeping up with regulations. Solution: Subscribe to updates from sources like the International AI Safety Report.

Challenge 4: Resistance from teams. Solution: Communicate benefits clearly.

By addressing these, you’ll smooth the process.

Internal link: Tips on overcoming tech adoption hurdles.

Conclusion: Take Action on Your AI Risk Assessment Today

Conducting an AI risk assessment in 2026 is about more than checking boxes—it’s about future-proofing your company. By following these steps, using solid frameworks, and leveraging tools, you can navigate AI’s complexities safely.

Start with your team and inventory, and build from there. The effort now will save headaches later.

If you’re ready to dive deeper, explore our advanced AI strategies post.

Remember, AI is powerful, and managed right, it’s a game-changer.
