AI-Enabled Deepfake Social Engineering

In today’s digital world, where videos and audio clips spread like wildfire on social media, there’s a growing threat that blends artificial intelligence with old-school trickery. This is what we call AI-enabled deepfake social engineering. It’s when bad actors use AI to create fake videos, voices, or images that look and sound incredibly real, all to manipulate people into giving away sensitive information or making poor decisions. Think of it as phishing on steroids, where instead of a sketchy email, you get a video call from your “boss” asking for urgent wire transfers. As these tricks get smarter, so do the ways to spot them. That’s where multimodal detection strategies come in – combining checks on video, audio, and even behavior to catch fakes before they cause harm.

Deepfakes aren’t new, but AI has made them easier to produce and harder to detect. Social engineering, the art of exploiting human trust, has been around forever – remember those phone scams pretending to be from the bank? Now, mix the two, and you’ve got a recipe for chaos in businesses, politics, and everyday life. This post dives into how these threats work, real examples, the dangers they pose, and most importantly, strategies to detect them using multiple angles. Whether you’re a business owner worried about scams or just someone scrolling through feeds, understanding this can help you stay safe.

Image credit: HKCert.org – Illustration showing multiple faces in a grid, representing deepfake face swaps.

What Are Deepfakes and How Do They Work?

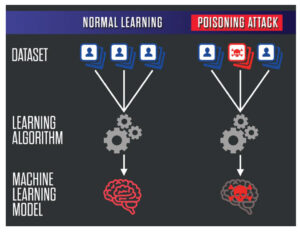

Deepfakes are synthetic media created using deep learning, a type of AI built on neural networks that are loosely inspired by how our brains process information. The term “deepfake” combines “deep learning” and “fake.” Basically, algorithms like Generative Adversarial Networks (GANs) train on large amounts of real images or audio to generate new content that looks authentic. For example, you feed the AI photos of two people, and it swaps their faces seamlessly in a video.

At its core, deepfake tech involves training models on datasets of faces, voices, or bodies. One network generates the fake, while another critiques it until the output fools even experts. Tools like DeepFaceLab or even apps on your phone can do this now, making it accessible to anyone with a computer. But when tied to social engineering, it’s not just fun filters – it’s a tool for deception.
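For readers who want the math, the generator-versus-critic setup described above is usually written as the minimax objective from the original GAN paper (Goodfellow et al., 2014). Here G is the generator, D is the critic (discriminator), x is a real sample, and z is random noise. Individual deepfake tools may use different losses or architectures, so treat this as the textbook form rather than a description of any specific tool.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

D is rewarded for telling real from fake, while G is rewarded when D gets it wrong, which is why the fakes keep improving during training.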

For more on the basics of deepfakes, check out our guide on understanding deepfake technology. It’s a great starting point if you’re new to this.

External resources like Fortinet’s glossary explain it well: they describe how deepfakes use AI to manipulate media for scams, hoaxes, and more.

The Basics of Social Engineering

Social engineering is all about psychology. Cybercriminals don’t always hack systems; they hack people. They build trust, create urgency, or exploit fear to get what they want – like passwords, money, or access. Common tactics include phishing emails, pretexting (making up stories), or baiting with free offers.

In the AI era, this gets amplified. Imagine getting a voice message from a family member in distress, asking for cash. But it’s not them – it’s a deepfake voice cloned from social media clips. This is where AI-enabled deepfake social engineering shines for attackers. It’s personal, convincing, and scales easily.

CrowdStrike notes that AI makes these attacks faster and more targeted, using tools like natural language processing to craft perfect messages. Their article on AI-powered social engineering is a must-read for defenses.

How AI-Enabled Deepfake Social Engineering Combines the Two

When deepfakes meet social engineering, it’s a perfect storm. Attackers use AI to create hyper-realistic fakes tailored to victims. For instance, they scrape your LinkedIn for details, then generate a video of your CEO authorizing a payment. This isn’t hypothetical – it’s happening.

The process has three steps. First, attackers gather data from public sources. Then, they use AI to clone voices or faces. Finally, they deploy the fake in a social engineering scheme, like a fake video call. IBM’s insights show AI can craft phishing in minutes, not hours, and add deepfakes for credibility, as covered in their take on generative AI in social engineering.

This blend targets businesses especially, where quick decisions can lead to big losses. But individuals aren’t safe either – think election manipulation or personal blackmail.

Image credit: Fortinet – Diagram outlining various uses of deepfakes, from scams to disinformation.

Real-World Examples of AI-Enabled Deepfake Social Engineering

Let’s look at some cases that hit the headlines. In 2024, a finance worker at Arup transferred about $25 million after a deepfake video call with fake executives. The voices and faces were spot-on, reportedly cloned from existing footage of the real people. Adaptive Security covers this in their blog on AI threats.

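Another case: scammers used AI to clone the voice of WPP’s CEO in an attempt to trick a senior colleague into handing over money and personal details. According to The Guardian’s reporting, the attempt did not succeed, but it still serves as a wake-up call for voice verification.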

In politics, deepfakes have swayed opinions. Fake videos of leaders saying inflammatory things spread on social media, causing unrest. The FBI has also issued an alert warning about AI in phishing, including voice cloning.

These examples show how AI makes old tricks new and far more dangerous. For tips on spotting phishing, see our post on phishing prevention basics.

The Risks and Impacts of These Threats

The dangers go beyond money. Financial losses are huge – Deloitte estimates deepfake fraud could cost billions in banking alone. But there’s also reputational damage, like when a fake video ruins a company’s image.

On a societal level, deepfakes erode trust. If you can’t believe what you see or hear, how do you know what’s real? This fuels misinformation, especially in elections or crises. The World Economic Forum highlights how deepfakes threaten trust, with fraud rising as AI tools spread, and their story discusses possible safeguards.

For individuals, it’s identity theft or extortion. Celebrities face fake porn, but anyone can be targeted. Businesses risk data breaches from tricked employees.

Group-IB lists use cases like AI scam calls and deepfakes in cybercrime on their blog.

Multimodal Detection Strategies: The Key to Fighting Back

Now, the good part: detecting these fakes. Multimodal means using multiple data types – video, audio, text – together. Why? Single checks, like just looking at faces, can be fooled. But combining them catches inconsistencies.

Visual Detection Methods

Start with the eyes. Deepfakes often mess up blinks, lighting, or skin textures. AI tools analyze pixels for artifacts, like unnatural shadows or blending errors.
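As a rough illustration of the blink check, here is a minimal sketch. It assumes a face-landmark library (not shown) has already produced an eye aspect ratio (EAR) for every frame, where low values mean the eye is closed. The function names, the 0.2 threshold, and the two-frame minimum are illustrative assumptions, not values taken from any specific tool.

```python
def count_blinks(ear_series, closed_below=0.2, min_frames=2):
    """Count blinks in a per-frame eye-aspect-ratio (EAR) series.

    A blink is a run of at least `min_frames` consecutive frames whose EAR
    is below `closed_below`. Both defaults are assumed, illustrative values.
    """
    blinks = 0
    run = 0  # length of the current run of "eye closed" frames
    for ear in ear_series:
        if ear < closed_below:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # clip ended while the eye was still closed
        blinks += 1
    return blinks


def blinks_per_minute(ear_series, fps):
    """Convert the blink count into a rate, given the video frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    minutes = len(ear_series) / (fps * 60)
    return count_blinks(ear_series) / minutes if minutes else 0.0
```

A rate far below what you would expect from a person talking on camera is worth a second look. Newer deepfakes often blink normally, so treat this as one weak signal to combine with others rather than a verdict.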

Techniques include CNNs (Convolutional Neural Networks) that spot face swaps. A paper on arXiv describing a multimodal framework uses visual models like VGG19 and reports 94% accuracy.

Also, check for spatiotemporal inconsistencies – does the mouth match the words over time?
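One simple way to picture the mouth-versus-words check is to correlate how open the mouth is with how loud the audio is, frame by frame. The sketch below assumes both series were extracted elsewhere and aligned to the same frame rate. The function names and the 0.3 threshold are hypothetical, and real lip-sync detectors use learned models rather than a plain correlation.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no sync information
    return cov / sqrt(var_x * var_y)


def lip_sync_suspicious(mouth_open, audio_energy, threshold=0.3):
    """Flag a clip when mouth movement barely tracks the audio loudness.

    `threshold` is an assumed cut-off chosen for illustration only.
    """
    return pearson(mouth_open, audio_energy) < threshold
```

In a genuine clip the mouth tends to open when the speaker gets louder, so the correlation is clearly positive. A value near zero or below suggests the audio and video may not belong together.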

Audio Detection Approaches

Voices give clues too. Deepfakes might have weird pitches or breaths. Spectrogram analysis spots synthetic patterns.

A study published by MDPI on robust multimodal detection combines audio with video for better results.

Tools use MFCCs (Mel-Frequency Cepstral Coefficients) to compare real vs. fake sounds.

Text and Behavioral Analysis

Don’t forget context. Does the message make sense? AI might generate odd phrasing. Behavioral checks look for anomalies, like unusual request timing.
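The behavioral checks described above can be as simple as a few rules. Here is a minimal sketch, assuming requests are logged with a timestamp, an amount, and a couple of yes/no signals. The `Request` fields, the working hours, the amount limit, and the flag names are all made up for illustration; a real deployment would tune them to its own baseline.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Request:
    received: datetime     # when the request arrived
    amount: float          # money requested, in your own currency
    channel: str           # e.g. "video_call", "email", "voicemail"
    urgent_language: bool  # "do it now", "keep this confidential", ...
    new_payee: bool        # recipient never paid before


def risk_flags(req, usual_hours=(8, 18), amount_limit=10_000.0):
    """Return human-readable red flags for a request (assumed rules)."""
    flags = []
    start, end = usual_hours
    out_of_hours = not (start <= req.received.hour < end)
    weekend = req.received.weekday() >= 5  # 5 = Saturday, 6 = Sunday
    if out_of_hours or weekend:
        flags.append("outside usual hours")
    if req.amount > amount_limit:
        flags.append("amount above limit")
    if req.urgent_language:
        flags.append("urgency pressure")
    if req.new_payee:
        flags.append("new payee")
    return flags


def needs_verification(req, max_flags=1):
    """Require an out-of-band check once more than `max_flags` rules fire."""
    return len(risk_flags(req)) > max_flags
```

A late-night Saturday video call asking for an urgent transfer to a new payee trips every rule here, which is exactly the kind of request that should be confirmed through another channel before anyone acts on it.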

Fortinet suggests AI analytics for tone variations in emails.

In videos, check if emotions match words – a happy face with sad voice is suspicious.

Fusion Techniques in Multimodal Detection

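The magic is in fusing modalities. Late fusion runs a separate detector on each modality and combines their scores at the end; early fusion merges the features from all modalities first and feeds them to a single model.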
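To make the difference concrete, here is a minimal sketch of both fusion styles. It assumes each modality already has a detector that outputs either a fake probability (late fusion) or a feature vector (early fusion). The weights, scores, and logistic-regression coefficients are hypothetical placeholders, not trained values.

```python
from math import exp


def late_fusion(scores, weights):
    """Weighted average of per-modality fake probabilities (each in [0, 1])."""
    total = sum(weights[m] for m in scores)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[m] * weights[m] for m in scores) / total


def early_fusion(features, coeffs, bias=0.0):
    """Concatenate per-modality features, then score them with ONE model.

    The single model here is a logistic regression with assumed coefficients;
    real systems typically use a neural network instead.
    """
    # Sort modality names so the concatenation order is deterministic.
    joint = [x for name in sorted(features) for x in features[name]]
    if len(joint) != len(coeffs):
        raise ValueError("one coefficient is needed per joint feature")
    z = bias + sum(c * x for c, x in zip(coeffs, joint))
    return 1.0 / (1.0 + exp(-z))


# Hypothetical detector outputs: probability that the clip is fake.
scores = {"video": 0.35, "audio": 0.90, "text": 0.60}
weights = {"video": 0.5, "audio": 0.3, "text": 0.2}
fused = late_fusion(scores, weights)
```

Notice that the video detector alone would have passed this clip, while the fused score is pulled upward by the suspicious audio. That is the practical argument for combining modalities.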

A review on ScienceDirect categorizes detectors as single-modal or multimodal and shows that fusion improves accuracy.

Unsupervised methods spot intra- and cross-modal inconsistencies, per BMVA’s paper.

For emotion-based detection, UMD’s work uses affective cues.

Image credit: Speedinvest – Market map of deepfake detection tools and incumbents.

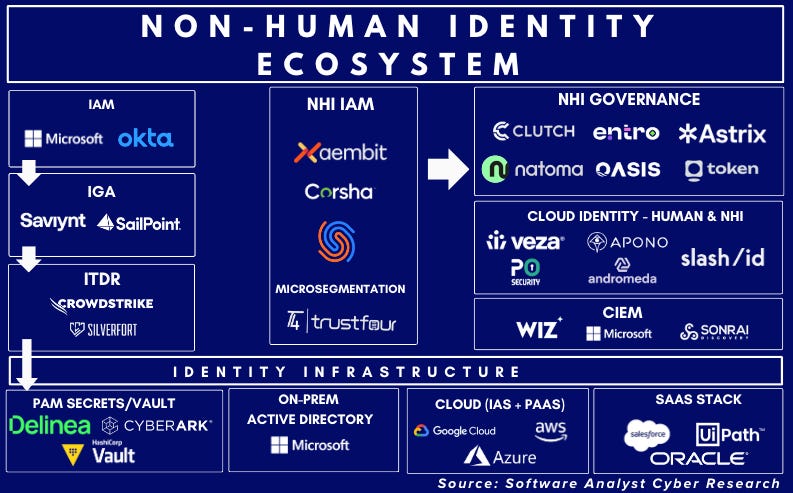

Tools and Technologies for Detection

There are plenty of tools out there. Reality Defender offers real-time checks, with a reported accuracy of 94-96%.

Clarity AI and Get Real Labs detect edits in images/voices.

Big names like Microsoft and Intel invest in this. DARPA’s programs fund research.

Open-source options include the MultiModal-DeepFake repository on GitHub, built for the DGM4 task (detecting and grounding multi-modal media manipulation).

For businesses, CrowdStrike’s Falcon uses AI for anomaly detection.

Check our review of top AI security tools.

Prevention Tips for Individuals and Businesses

Detection is great, but prevention is better. Train staff to recognize red flags, such as unusual requests, and to verify them through a separate channel.

Use multi-factor authentication beyond passwords; behavioral biometrics help.

For calls, establish safe words or callback procedures.
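Here is a minimal sketch of how safe words and callbacks might look in code. It assumes a trusted contact directory and a safe word stored only as a salted hash. The directory contents, function names, and iteration count are illustrative assumptions, and only Python standard-library calls are used.

```python
import hashlib
import hmac

# Hypothetical trusted directory, maintained separately from any incoming
# message, call, or meeting invite.
DIRECTORY = {"ceo@example.com": "+1-555-0100"}

ITERATIONS = 100_000  # assumed PBKDF2 work factor for this sketch


def callback_number(claimed_identity, number_in_request):
    """Return the number to call back, or None if the person is unknown.

    The number supplied in the request is deliberately ignored: an attacker
    controls it, so only the directory entry is trusted.
    """
    return DIRECTORY.get(claimed_identity)


def hash_safe_word(safe_word, salt):
    """Derive a salted hash so the safe word itself is never stored."""
    normalized = safe_word.strip().lower().encode("utf-8")
    return hashlib.pbkdf2_hmac("sha256", normalized, salt, ITERATIONS)


def safe_word_matches(spoken, stored_hash, salt):
    """Compare in constant time to avoid leaking how close a guess was."""
    return hmac.compare_digest(hash_safe_word(spoken, salt), stored_hash)
```

The key design choice is that nothing supplied by the caller is trusted: you dial the number you already have on file, and the safe word is something agreed on in advance rather than anything that appears in the request itself.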

Check Point recommends email security and training, and their guide has more tips.

Individuals: Limit social media shares, use watermarking tools for your content.

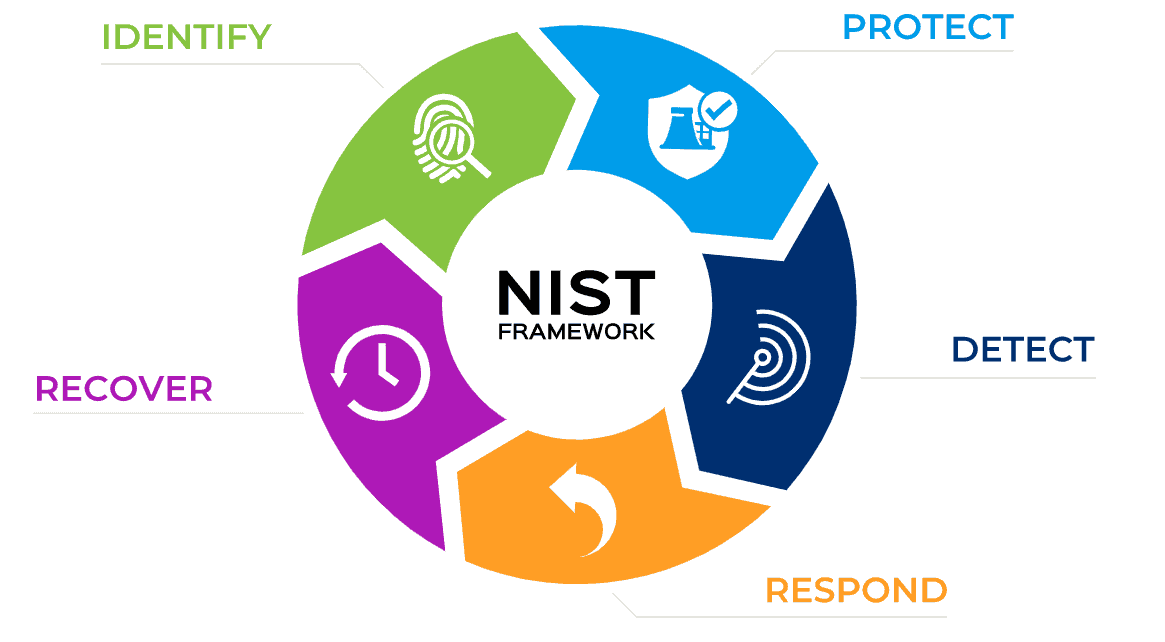

NIST’s new standards guide protections against deepfakes, as Netarx’s blog explains.

Future Trends in AI-Enabled Deepfake Social Engineering and Detection

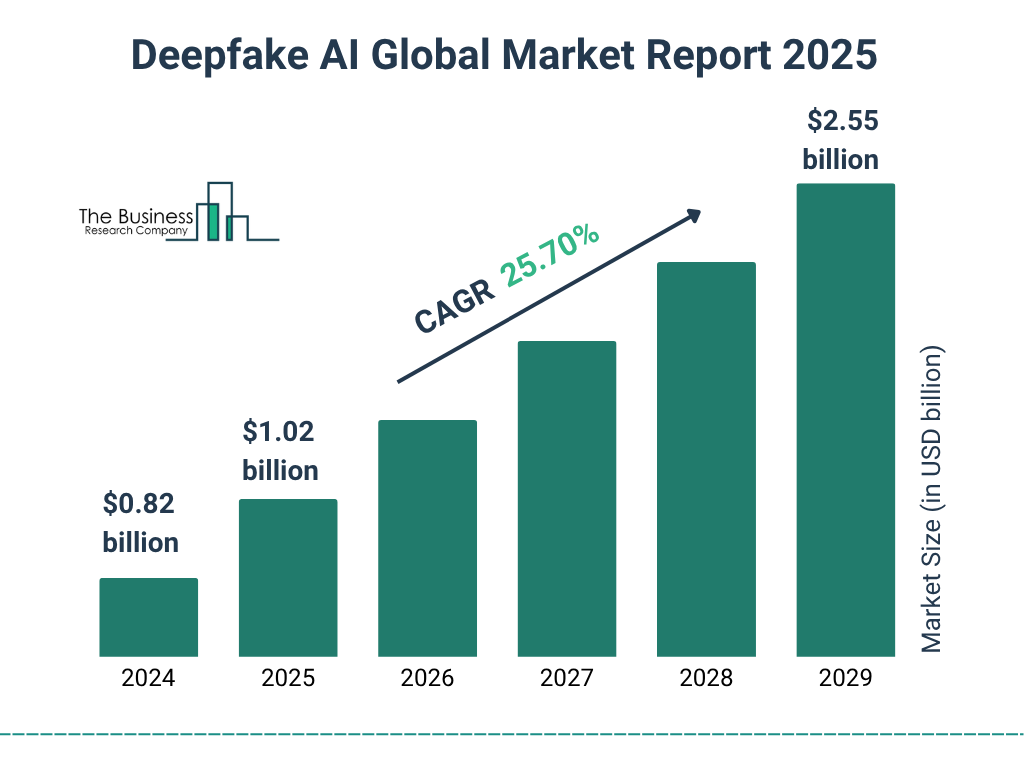

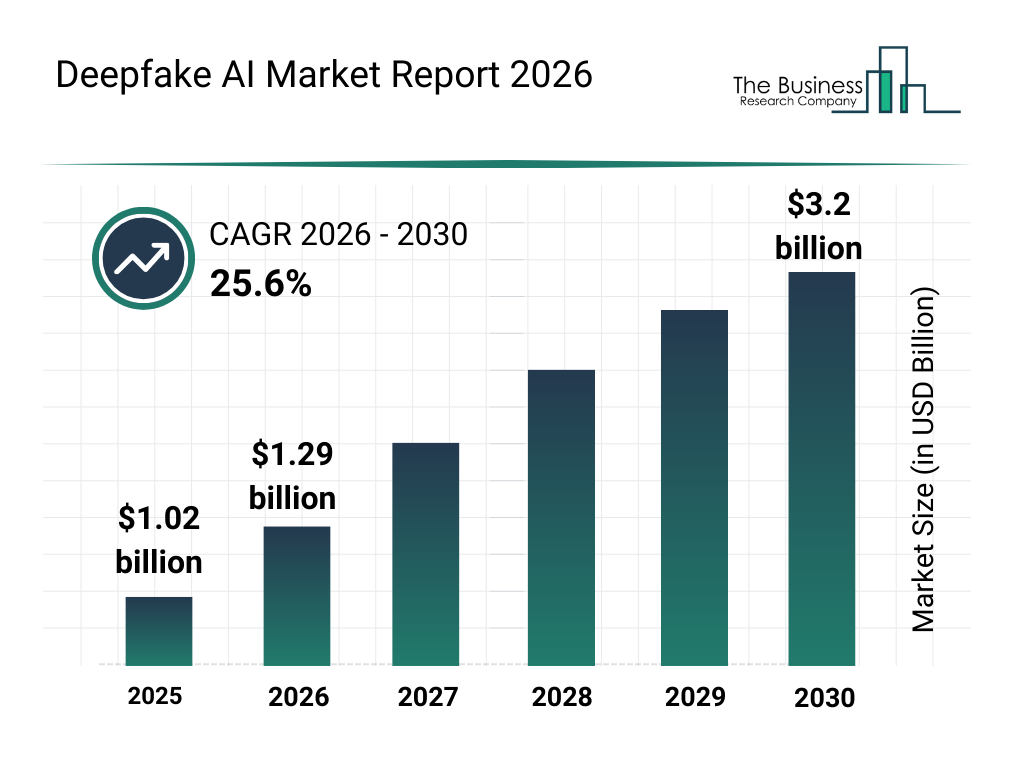

The market for deepfake AI is booming: a market report from The Business Research Company expects it to hit $3.2 billion by 2030.

Trends: More advanced GANs and diffusion models for creation, but also better transformers for detection.

ACM’s overview predicts multimodal fusion as key.

AI agents might automate attacks, but defenders use AI too, as with Arctic Wolf’s anomaly detection. Their post looks ahead.

Challenges remain, including racial bias in training datasets, according to a survey available on PMC.

Image credit: The Business Research Company – Bar graph showing deepfake AI market growth from 2025 to 2030.

Wrapping It Up

AI-enabled deepfake social engineering is a serious issue, blending cutting-edge tech with human vulnerabilities. But with multimodal detection strategies – checking visuals, audio, and behavior together – we can fight back effectively. Stay informed, use the tools, and train yourself and your team. The future might bring more sophisticated threats, but detection tech is evolving just as fast.

For more on cybersecurity, explore our cybersecurity essentials section. And remember, if something seems off, double-check – it could save you a lot.
