Top 10 AI Governance Best Practices for Businesses 2026

In 2026, artificial intelligence sits at the heart of how companies operate, from customer service chatbots to supply chain predictions and hiring tools. But with great power comes real responsibility. Without solid rules in place, businesses face biased decisions, data leaks, sudden regulatory fines, and loss of customer trust. That’s exactly why these Top 10 AI Governance Best Practices matter more than ever this year.

Most obligations under the EU AI Act, including those for many high-risk systems, are scheduled to apply from August 2026, with some product-related high-risk rules following later. Agentic AI that can act on its own is spreading fast. Companies that treat governance as an afterthought risk falling behind. Those that build it in from day one gain trust, speed up safe innovation, and protect their bottom line.

This post walks you through the Top 10 AI Governance Best Practices in simple, actionable steps. Whether you run a small manufacturing firm in India or a global tech company, these practices help you use AI responsibly while staying ahead of risks. Let’s explore them one by one so you can start applying them right away.

Image credit: Artificial Intelligence & the Future of Work via Columbia University Center for Sustainable Development

Why AI Governance Matters So Much in 2026

Before we jump into the list, understand the big picture. Fewer than one in 100 organizations have fully implemented responsible AI practices, according to the World Economic Forum. That gap creates real problems — unexpected model failures, regulatory headaches, and damaged reputation.

In 2026, AI isn’t just a tool anymore. It’s making autonomous decisions that affect people’s lives and your business results. Strong governance turns AI from a potential liability into a trusted competitive advantage. It helps you spot risks early, meet laws like the EU AI Act, and build systems that people actually trust.

Businesses following these Top 10 AI Governance Best Practices report higher employee confidence, fewer compliance issues, and faster AI rollout without nasty surprises. Ready to join them? Here are the practices that deliver results.

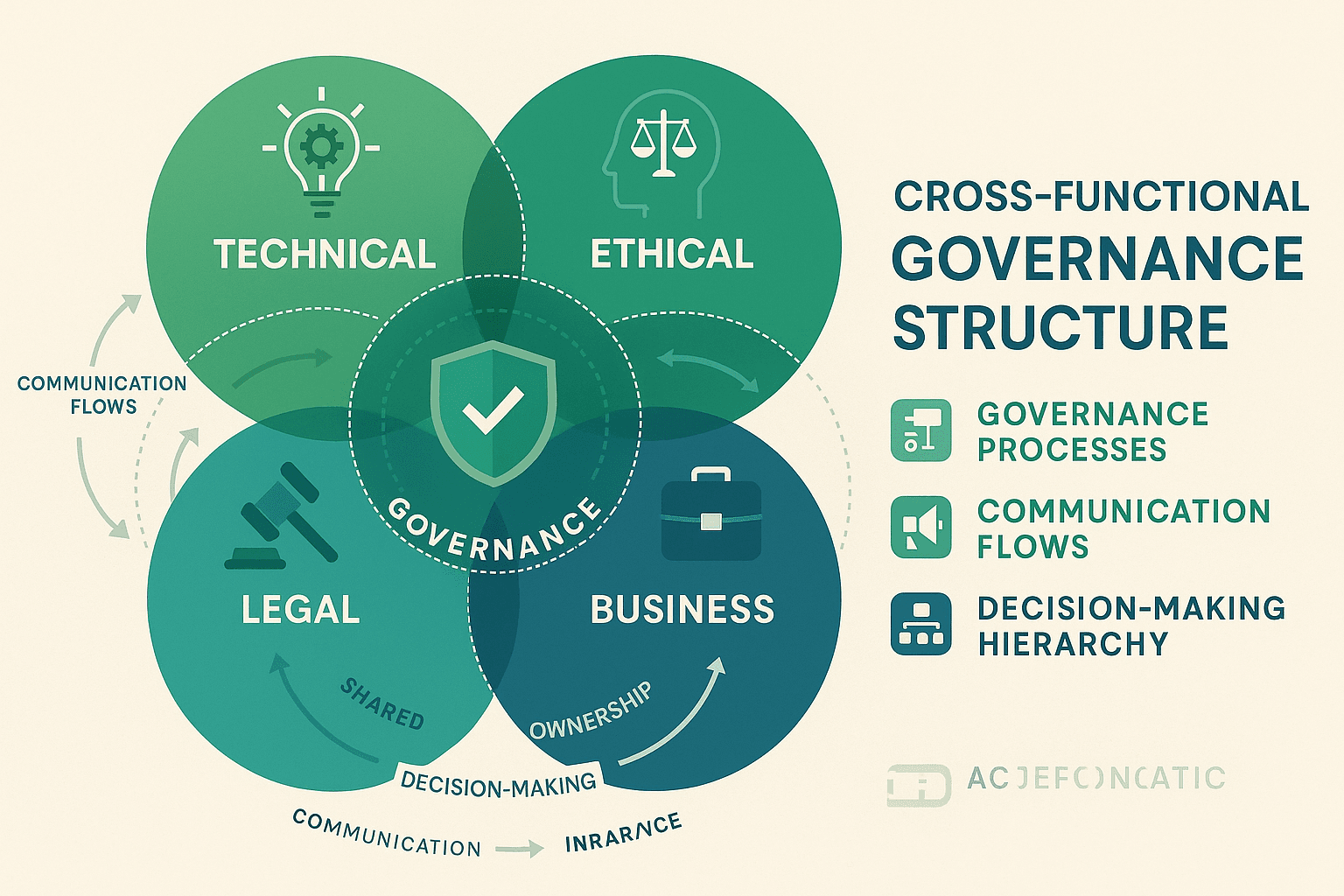

1. Establish a Cross-Functional AI Governance Committee

Start with people. A single department cannot handle AI risks alone. You need voices from legal, IT, ethics, data science, business units, and even HR sitting together regularly.

This committee reviews new AI projects, sets policies, and has real power to say “no” or “fix this” before deployment. In 2026, with agentic AI making independent choices, you cannot afford siloed decisions.

How to implement it step by step:

- Pick a senior sponsor — ideally a C-level executive.

- Include 8-12 members with clear roles.

- Meet every two weeks, not just quarterly.

- Create a simple charter that spells out decision rights and escalation paths.

- Document every meeting and share summaries company-wide.

A mid-sized European retailer did exactly this last year. Their committee caught a hiring algorithm that unfairly screened candidates from certain regions. They fixed it before launch and avoided a potential lawsuit.

The result? Faster approvals for safe projects and zero major incidents. This practice sits at the core of the Top 10 AI Governance Best Practices because everything else flows from having the right team in place.

Image credit: Cross-Functional Collaboration in AI Governance via VerityAI

Learn more about building effective teams from the NIST AI Risk Management Framework — a free resource that many committees use as their starting guide: NIST AI RMF.

2. Define Clear AI Ethics Principles Aligned with Business Values

Write down what “good AI” means for your company. Create 5-7 simple principles like fairness, transparency, privacy, and human oversight. Make them specific to your industry and values.

In 2026, customers and investors check these principles before buying or investing. Vague statements no longer work.

Practical steps:

- Form a small working group to draft the principles.

- Run workshops with employees to gather input.

- Publish them on your website and intranet.

- Link every AI project to at least two principles in the approval form.

- Review and update them annually.
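The "link every AI project to at least two principles" step is easy to enforce automatically. Here is a minimal sketch of how an approval form check might look. The principle names, the `ApprovalForm` class, and the `validate_form` function are all hypothetical, so swap in your own published principles and form fields.

```python
from dataclasses import dataclass, field

# Hypothetical principle names; replace with your company's published list.
PRINCIPLES = {"fairness", "transparency", "privacy", "human_oversight", "accountability"}
MIN_PRINCIPLES = 2  # "at least two principles" from the steps above


@dataclass
class ApprovalForm:
    project: str
    principles: set[str] = field(default_factory=set)


def validate_form(form: ApprovalForm) -> list[str]:
    """Return a list of problems; an empty list means the form passes."""
    problems = []
    unknown = form.principles - PRINCIPLES
    if unknown:
        problems.append(f"unknown principles: {sorted(unknown)}")
    linked = form.principles & PRINCIPLES
    if len(linked) < MIN_PRINCIPLES:
        problems.append(f"links {len(linked)} principle(s), needs at least {MIN_PRINCIPLES}")
    return problems


print(validate_form(ApprovalForm("loan-model", {"fairness", "transparency"})))
print(validate_form(ApprovalForm("faq-chatbot", {"fairness"})))
```

A form that names two recognized principles passes with an empty list. Anything else comes back with a short, readable reason the committee can act on.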

A bank in Asia used this approach. Their principle “AI must never discriminate based on protected characteristics” helped them redesign a loan approval model that previously showed bias. Customers noticed the fairer process and gave higher satisfaction scores.

This practice builds the moral compass your AI systems need. It turns abstract ethics into daily decisions and forms a foundation for all other Top 10 AI Governance Best Practices.

Image credit: Key Principles of AI Ethics via Dreamstime

3. Implement Thorough Risk Assessment and Classification Processes

Not every AI tool carries the same risk. A chatbot answering FAQs is low risk. An AI deciding who gets a loan is high risk. Classify every use case and apply the right level of scrutiny.

The EU AI Act uses exactly this risk-based approach, and smart companies follow it globally for consistency.

Easy workflow to follow:

- Create a short intake form for every new AI idea.

- Score impact on people, finances, and reputation.

- Assign risk level: minimal, limited, high, or unacceptable.

- For high-risk projects, require extra testing and human review.

- Keep a central log of all classified systems.
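The workflow above can be sketched in a few lines of code. This is only an illustration: the 0 to 3 scoring scale, the cut-offs, the `PROHIBITED_USES` set, and the example systems are assumptions made for this sketch, not the legal tests in the EU AI Act. Your committee and legal team should set the real criteria.

```python
from dataclasses import dataclass

# Hypothetical use cases that are rejected outright; adjust to your legal advice.
PROHIBITED_USES = {"social_scoring"}


@dataclass
class Intake:
    name: str
    use_case: str
    people_impact: int      # 0 (none) to 3 (affects livelihoods or rights)
    financial_impact: int   # 0 to 3
    reputation_impact: int  # 0 to 3


def classify(intake: Intake) -> str:
    """Map intake scores to one of the four risk levels listed above."""
    if intake.use_case in PROHIBITED_USES:
        return "unacceptable"
    worst = max(intake.people_impact, intake.financial_impact, intake.reputation_impact)
    if worst >= 3:
        return "high"
    if worst >= 1:
        return "limited"
    return "minimal"


registry = {}  # central log: system name -> risk level

for item in [
    Intake("faq-chatbot", "customer_support", 0, 0, 0),
    Intake("route-optimizer", "logistics", 1, 2, 1),
    Intake("driver-scheduling", "workforce", 3, 2, 2),
]:
    registry[item.name] = classify(item)

print(registry)
```

Taking the worst single score, rather than an average, is a deliberate choice here: one severe impact on people should not be diluted by low scores elsewhere.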

One logistics company classified its route optimization AI as limited risk but its driver scheduling tool as high risk because it affected livelihoods. The company added extra fairness checks to the scheduling tool and slept better at night.

This practice prevents small problems from becoming big headlines and saves time by focusing effort where it matters most.

Image credit: AI Risk Management Workflow via Medium

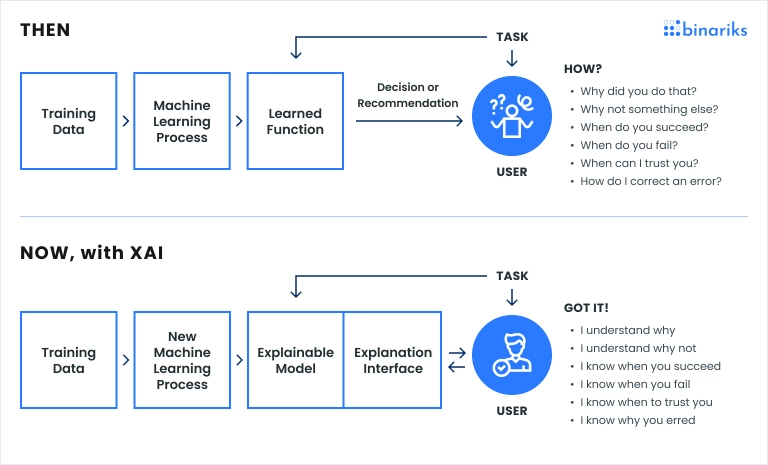

4. Ensure Full Transparency and Explainability in AI Decisions

People want to know why the AI made a particular choice. Black-box systems are no longer acceptable in 2026.

Build explainability into your models from the start. For high-stakes decisions, provide clear reasons in plain language.

How to make it happen:

- Choose models that support explainable techniques whenever possible.

- Document data sources, training methods, and limitations.

- Create user-friendly explanation interfaces for customers and employees.

- Test explanations with real users to ensure they make sense.
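To make "clear reasons in plain language" concrete, here is a small sketch for the simplest case: a linear score where each input's contribution is its weight times its value. The weights, feature names, and the `explain` function are invented for illustration. More complex models need dedicated explanation techniques, and this sketch does not cover them.

```python
# Hypothetical weights for a simple linear risk score; a real model would be trained.
WEIGHTS = {"blood_pressure": 0.8, "age": 0.3, "exercise_hours": -0.5}
LABELS = {
    "blood_pressure": "blood pressure",
    "age": "age",
    "exercise_hours": "weekly exercise",
}


def explain(features: dict[str, float], top_n: int = 2) -> list[str]:
    """Return plain-language reasons, largest contribution first."""
    # Contribution of each feature to a linear score is weight * value.
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    reasons = []
    for name, contribution in ranked[:top_n]:
        direction = "raised" if contribution > 0 else "lowered"
        reasons.append(f"{LABELS[name]} {direction} the score by {abs(contribution):.2f}")
    return reasons


# Feature values are assumed to be standardized (0 = typical patient).
for reason in explain({"blood_pressure": 1.5, "age": 0.5, "exercise_hours": 1.0}):
    print(reason)
```

Showing only the top few reasons keeps the explanation short enough for a busy doctor or customer to read, which is exactly what you would test with real users.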

A healthcare provider now shows doctors why the AI flagged a patient for extra tests. Doctors trust the system more, and patients feel respected. This practice reduces complaints and builds long-term loyalty.

Image credit: Explainable AI Diagram via Binariks

External resource: The Partnership on AI offers excellent guidance on transparency for businesses — check their 2026 priorities here: Partnership on AI.

5. Set Up Continuous Monitoring and Post-Deployment Auditing

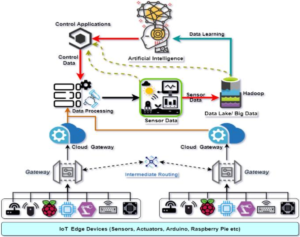

AI systems change over time. Data drifts, new attacks appear, and performance can drop. You need eyes on your models 24/7.

In 2026, “set it and forget it” is the fastest way to fail.

Build a monitoring system:

- Track accuracy, fairness metrics, and data quality daily.

- Set automatic alerts for anything outside normal ranges.

- Conduct quarterly independent audits.

- Have a clear plan for when to roll back or retrain a model.
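The daily checks and automatic alerts above might look something like this in practice. Treat it as a rough sketch: the metric names, the acceptable ranges in `THRESHOLDS`, and the simple escalation rule in `next_action` are all assumptions, and a real setup would pull values from your monitoring tool.

```python
# Hypothetical acceptable ranges (min, max) for each daily metric.
THRESHOLDS = {
    "accuracy": (0.90, 1.00),
    "demographic_parity_gap": (0.00, 0.05),
    "missing_value_rate": (0.00, 0.02),
}


def check_metrics(daily: dict[str, float]) -> list[str]:
    """Return one alert message per metric that is missing or out of range."""
    alerts = []
    for name, (low, high) in THRESHOLDS.items():
        value = daily.get(name)
        if value is None:
            alerts.append(f"{name}: no value reported")
        elif not low <= value <= high:
            alerts.append(f"{name}: {value} outside [{low}, {high}]")
    return alerts


def next_action(alerts: list[str]) -> str:
    """Very simple policy: any alert triggers a review; two or more trigger a rollback."""
    if len(alerts) >= 2:
        return "roll back"
    if alerts:
        return "review and consider retraining"
    return "no action"


today = {"accuracy": 0.87, "demographic_parity_gap": 0.03, "missing_value_rate": 0.01}
alerts = check_metrics(today)
print(alerts, "->", next_action(alerts))
```

A missing metric is treated as an alert on purpose. A monitoring pipeline that silently stops reporting is a risk in itself.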

A retail chain monitors its recommendation engine in real time. When the team noticed recommendations becoming less diverse for certain customer groups, they retrained the model within days and kept sales strong.

This practice keeps your AI reliable long after launch and is one of the most practical Top 10 AI Governance Best Practices for day-to-day operations.

Image credit: AI Model Monitoring Dashboard via Evidently AI

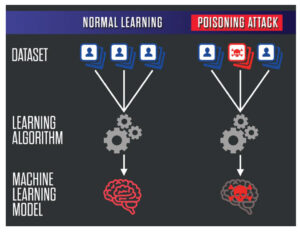

6. Strengthen Data Privacy, Security, and Quality Management

Garbage in, garbage out still applies — but now with bigger privacy risks. Clean, secure, and properly consented data is non-negotiable.

Key actions:

- Map all data used by AI systems.

- Apply strict access controls and encryption.

- Run regular quality checks for bias and completeness.

- Update privacy notices to cover AI use clearly.
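Regular quality checks do not need heavy tooling to get started. As a minimal sketch, the two functions below measure completeness (how many rows have each required field filled in) and group representation (how rows are spread across a group such as region). The function names and the sample rows are made up for illustration.

```python
def completeness(rows: list[dict], required: list[str]) -> dict[str, float]:
    """Share of rows with a non-empty value for each required field."""
    total = len(rows)
    result = {}
    for field in required:
        filled = sum(1 for row in rows if row.get(field) not in (None, ""))
        result[field] = filled / total if total else 0.0
    return result


def group_shares(rows: list[dict], group_field: str) -> dict[str, float]:
    """Share of rows per group, to spot under-represented groups."""
    counts = {}
    for row in rows:
        key = row.get(group_field) or "unknown"
        counts[key] = counts.get(key, 0) + 1
    return {key: count / len(rows) for key, count in counts.items()}


# Hypothetical training rows.
rows = [
    {"age": 30, "region": "north"},
    {"age": None, "region": "north"},
    {"age": 41, "region": "south"},
    {"age": 25, "region": "north"},
]
print(completeness(rows, ["age", "region"]))
print(group_shares(rows, "region"))
```

A lopsided share for one group is not proof of bias, but it is a cheap early signal that the data deserves a closer look before training.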

Companies following GDPR and preparing for stronger 2026 rules stay ahead. A manufacturing firm discovered old training data contained sensitive customer information they had forgotten about. They cleaned it up and avoided a potential breach.

This practice protects your customers and your reputation at the same time.

Image credit: Data Privacy and Security for AI via DataCenterNews

7. Invest in Comprehensive Training and Build a Responsible AI Culture

Tools and committees matter, but people make the real difference. Everyone who touches AI needs to understand governance basics.

Make training practical:

- Offer short, role-specific modules (developers get technical, managers get oversight focus).

- Run monthly “AI ethics in action” discussions.

- Recognize teams that spot risks early.

- Include governance questions in performance reviews.

A global software company saw a 40% drop in policy violations after making training mandatory and fun. Employees started reporting shadow AI use voluntarily because they understood the “why.”

Culture eats policy for breakfast — this practice ensures governance actually sticks.

Image credit: AI Training Workshop via Loyola Marymount University

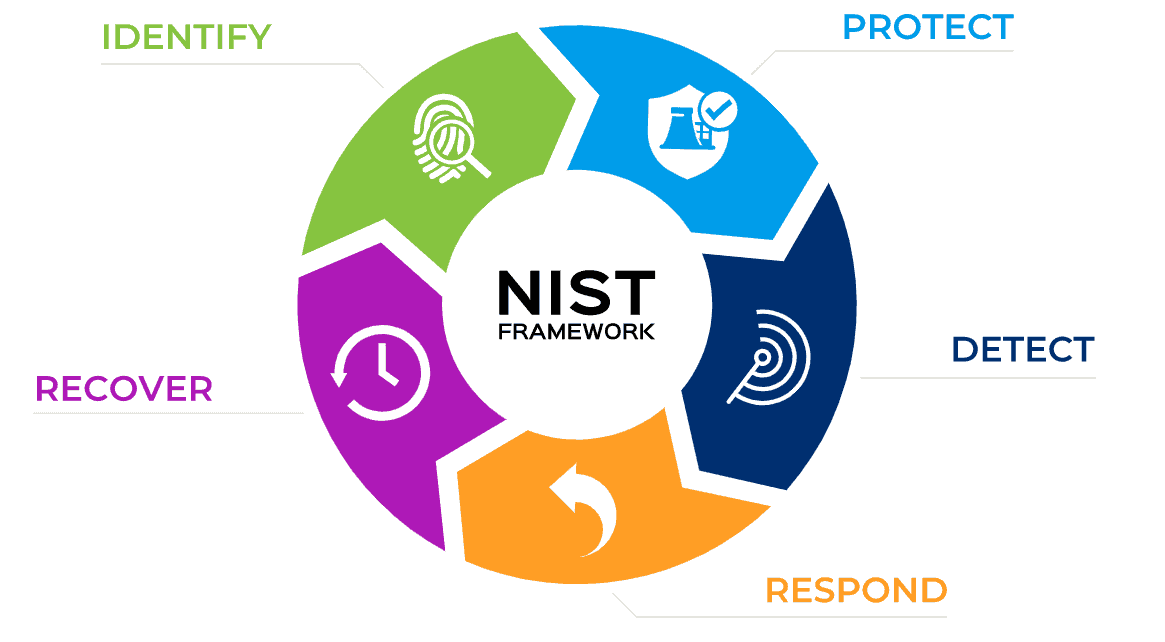

8. Achieve Compliance with Key Regulations and International Standards

2026 brings major EU AI Act obligations into application, alongside evolving rules in the US, UK, and elsewhere. Treat compliance as a baseline, not the ceiling.

Smart approach:

- Map your systems against the strictest requirements (often the EU standard).

- Use frameworks like NIST AI RMF and ISO/IEC 42001 as your backbone.

- Keep a living compliance dashboard.

- Prepare for third-party audits.

Businesses that aligned early report smoother operations and even win new contracts because customers trust their compliance.

Official EU AI Act resource for the latest timelines and obligations: European Commission AI Act page.

9. Engage Stakeholders for Inclusive and Accountable Governance

Talk to customers, employees, partners, and communities affected by your AI. Their feedback makes your governance stronger and more legitimate.

Simple ways to engage:

- Run annual AI impact surveys.

- Create an external advisory board.

- Publish transparency reports with real metrics.

- Hold public forums on major AI initiatives.

A utility company in Europe involved local communities when deploying AI for energy distribution. The result was higher acceptance and fewer complaints during outages.

This practice turns potential critics into supporters.


Image credit: AI Stakeholder Engagement via CERTA Foundation

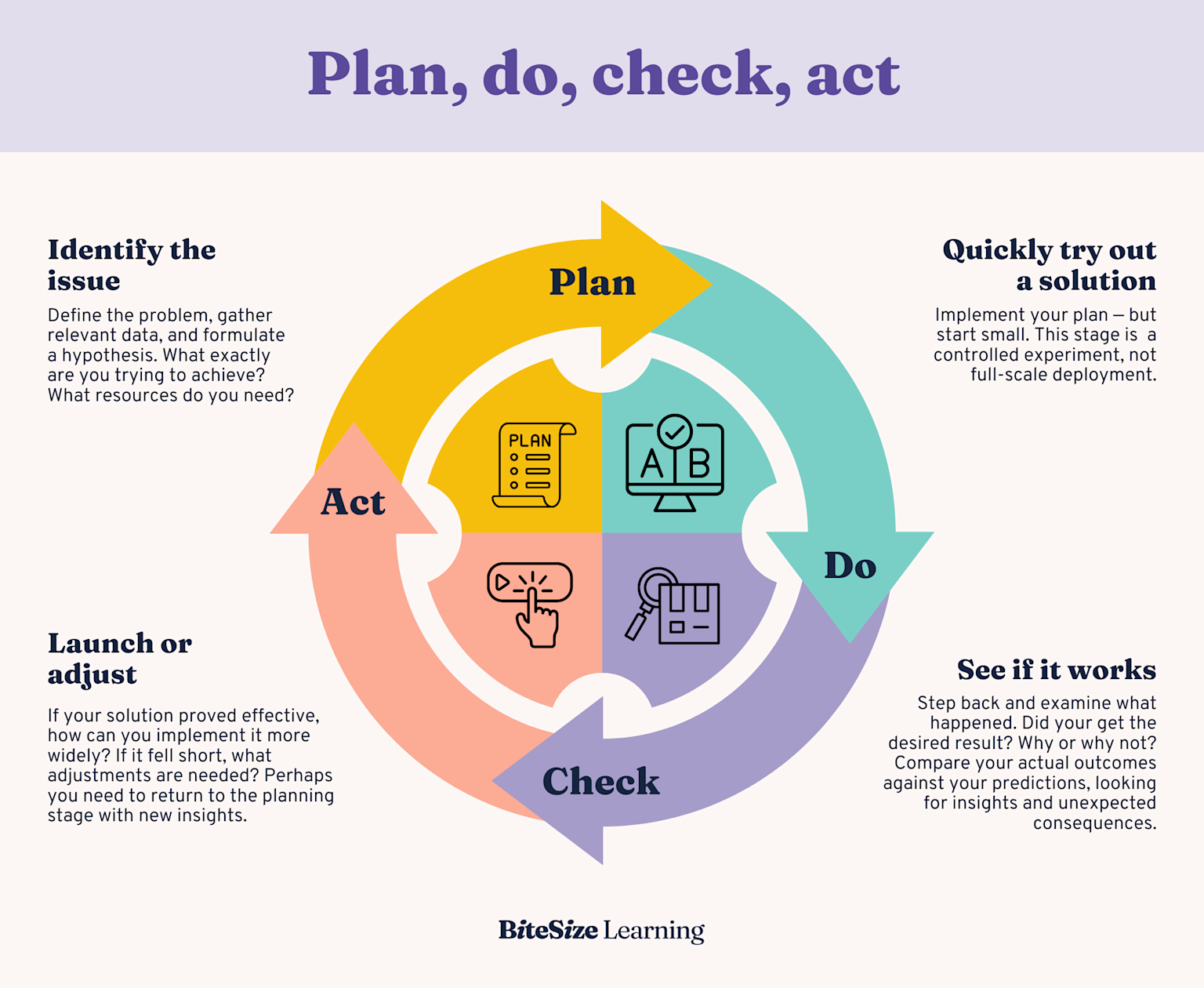

10. Commit to Continuous Improvement and Adaptation for Future-Proofing

AI governance is never “done.” New capabilities like advanced agents arrive every few months. Build a system that learns and evolves.

Make improvement routine:

- Schedule yearly governance maturity assessments.

- Use the PDCA (Plan-Do-Check-Act) cycle for your AI program.

- Pilot new tools and practices in controlled environments.

- Stay connected to industry groups and regulators.

Companies following this practice treat governance as a living program, not a one-time project. They adapt faster and capture more value from AI over time.

Image credit: PDCA Cycle for AI via Medium

How to Get Started with These Top 10 AI Governance Best Practices Today

Pick three practices that match your biggest current pain points. Maybe start with the committee and risk classification — they give quick wins. Assign owners, set 90-day milestones, and measure progress with simple KPIs like “percentage of AI projects reviewed” or “number of risks mitigated.”
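The two KPIs mentioned above are simple to compute once you keep a project list and a risk log. Here is a small sketch. The record fields (`reviewed`, `status`) and the sample data are assumptions, so adapt them to however your team tracks projects.

```python
def review_coverage(projects: list[dict]) -> float:
    """Percentage of AI projects that have been through governance review."""
    if not projects:
        return 0.0
    reviewed = sum(1 for p in projects if p["reviewed"])
    return 100.0 * reviewed / len(projects)


def risks_mitigated(risks: list[dict]) -> int:
    """Number of logged risks whose status is 'mitigated'."""
    return sum(1 for r in risks if r["status"] == "mitigated")


# Hypothetical records for a 90-day milestone check.
projects = [
    {"name": "faq-chatbot", "reviewed": True},
    {"name": "route-optimizer", "reviewed": True},
    {"name": "driver-scheduling", "reviewed": True},
    {"name": "demand-forecast", "reviewed": False},
]
risks = [
    {"id": 1, "status": "mitigated"},
    {"id": 2, "status": "open"},
    {"id": 3, "status": "mitigated"},
]

print(f"{review_coverage(projects):.0f}% of AI projects reviewed")
print(f"{risks_mitigated(risks)} risks mitigated")
```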

Many businesses also use helpful platforms that automate parts of monitoring and documentation. The key is consistent action, not perfection on day one.

Real Business Benefits You Can Expect

Companies that follow these Top 10 AI Governance Best Practices see:

- Up to 50% fewer compliance incidents

- Higher customer trust scores

- Faster approval times for safe AI projects

- Better talent attraction because people want to work for responsible organizations

- Protection from fines that, under the EU AI Act, can reach up to EUR 35 million or 7% of global annual turnover for the most serious violations

Governance is not a cost center — it’s an investment that pays back through sustainable growth.

Conclusion: Make 2026 Your Year of Responsible AI Leadership

The businesses that thrive with AI in 2026 won’t be the ones with the fanciest models. They’ll be the ones with the strongest governance foundations. By putting these Top 10 AI Governance Best Practices into action, you protect your company, respect your customers, and unlock AI’s full positive potential.

Start small, stay consistent, and keep learning. The future belongs to organizations that use AI not just intelligently, but responsibly.

Which of these practices will you tackle first? Drop a comment below or share this guide with your team. For more insights on building trustworthy AI, explore our related articles on data privacy strategies for AI and emerging AI regulations in Asia.

Image credit for compliance visual: EU AI Act Compliance via VerityAI

Thank you for reading. Here’s to safer, smarter AI in 2026 and beyond!

FAQ: Top 10 AI Governance Best Practices for Businesses 2026

What exactly is AI governance? AI governance is the set of rules, processes, and people that ensure your artificial intelligence systems are developed and used safely, fairly, and legally. Think of it as the guardrails that keep AI on the right path.

Do small businesses need to follow all 10 practices? Yes, but start simple. A three-person team can still create basic principles, classify risks, and monitor key systems. Scale up as you grow.

How does the EU AI Act affect companies outside Europe? If you place AI systems on the EU market, or the output of your AI is used in the EU, the Act can apply to you even if your company is based elsewhere. Many global firms use EU standards everywhere for simplicity and to build extra trust.

What is the biggest risk if we ignore governance? Regulatory fines, loss of customer trust, biased decisions that lead to lawsuits, and AI systems that fail unexpectedly and damage your brand.

How long does it take to implement these practices? Most companies see meaningful progress in 3-6 months. Full maturity usually takes 12-18 months with steady effort.

Can we use free resources to get started? Absolutely. The NIST AI RMF, Partnership on AI materials, and EU guidelines are all free and excellent starting points.

What role does the board of directors play? Boards should receive regular updates on AI risks and governance effectiveness. In 2026, AI oversight is becoming a core board responsibility.

How do we measure success of our AI governance program? Track metrics like percentage of AI projects that pass risk review, number of incidents, employee training completion rates, and stakeholder trust scores.

Is there a difference between AI governance and AI ethics? Ethics focuses on “doing the right thing.” Governance provides the structures and processes to make ethics happen consistently.

Where can I learn more about agentic AI risks? The Partnership on AI’s 2026 priorities document has a dedicated section on governing AI agents safely.
